Applying the learning algorithm to a single-layer perceptron

Explore the math of applying the learning algorithm to a single-layer perceptron.

This article is part of a series:

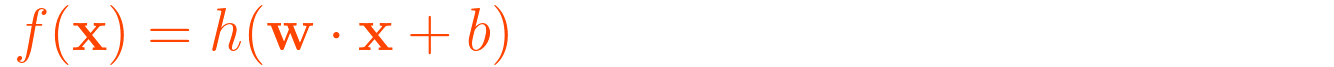

This is the perceptron we used in the first article, with a static bias b and an activation function h:

$$y = h(\mathbf{w} \cdot \mathbf{x} + b) = h(w_1 x_1 + w_2 x_2 + b)$$

To apply the learning algorithm, we need to treat the bias b as an additional weight.

In this way the bias b is included in the learning algorithm and we don’t have to assign its value manually.

This works because w ⋅ x + b = (w, b) ⋅ (x, 1).

So instead of only two weights and two inputs, we add a third weight that represents b and a third input that is always 1.

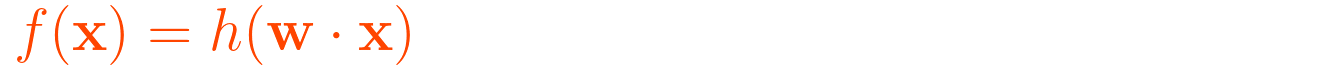

Now, we have the following equation:

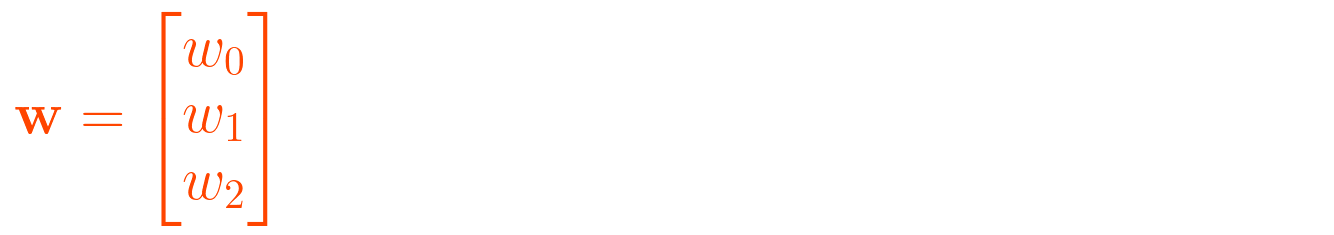

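Where:

$$\mathbf{w} = [w_0, w_1, w_2]$$

or

$$\mathbf{w} = [b, w_1, w_2]$$

x is now:

$$\mathbf{x} = [x_0, x_1, x_2] = [1, x_1, x_2]$$

The first input x0 is always 1, so w0 is now effectively the bias b.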
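The identity w ⋅ x + b = (w, b) ⋅ (x, 1) can be checked numerically. The following is a minimal sketch in plain Python; the helper name `dot` and the example values are assumptions made for illustration, not taken from the article.

```python
def dot(u, v):
    """Dot product of two equally long sequences."""
    return sum(ui * vi for ui, vi in zip(u, v))

# Example values (assumed for illustration only).
w = [-1, -1]   # weights w1, w2
x = [0, 1]     # inputs x1, x2
b = 1          # static bias

# Original form: weighted sum plus a separate bias term.
with_bias = dot(w, x) + b

# Augmented form: the bias becomes weight w0, paired with a constant input x0 = 1.
w_aug = [b] + w
x_aug = [1] + x
augmented = dot(w_aug, x_aug)

print(with_bias, augmented)  # both forms give the same value
```

Because the two forms always agree, the learning algorithm only has to deal with one weight vector and never needs a special case for the bias.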

The training data

In order to train our single-layer perceptron we need to understand the structure of our training data.

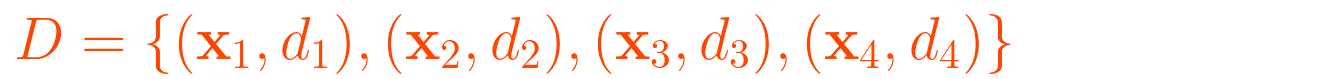

D is the training set of s samples:

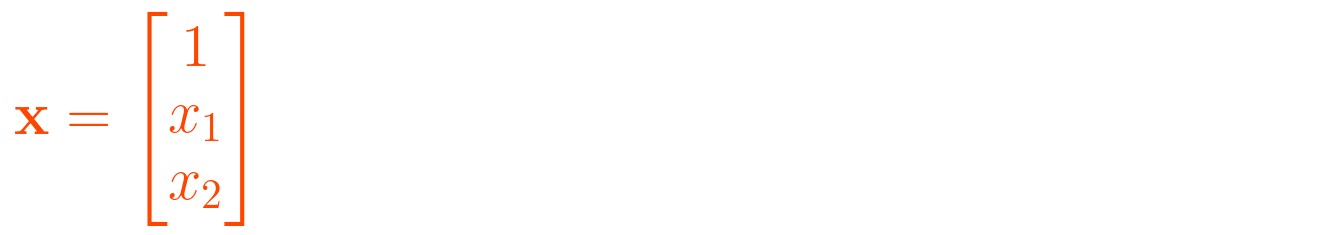

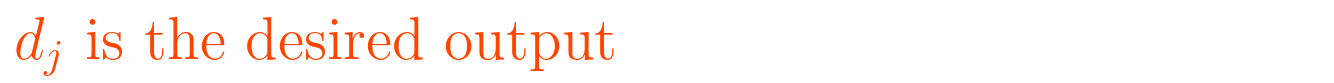

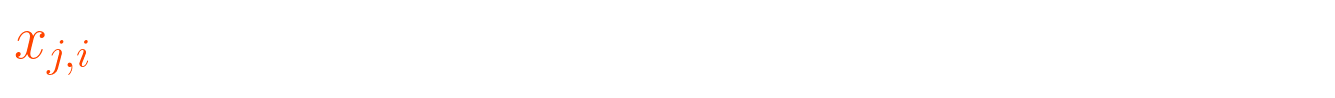

Where $\mathbf{x}_j$ is the j-th training input vector and $d_j$ is the desired output for that input.

To access the value of the i-th feature of the j-th training input vector:

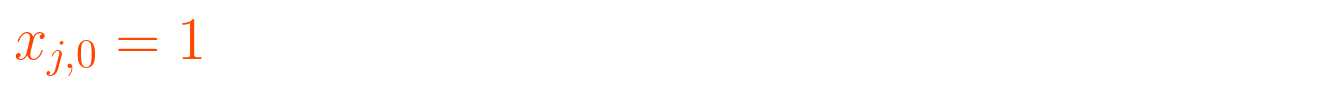

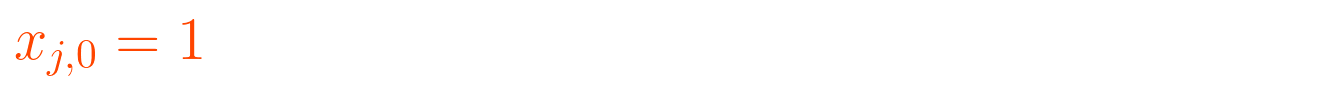

The first feature of the j-th training input vector is always 1:

$$x_{j,0} = 1$$

Again, because of that, w0 effectively is the bias that we use instead of the constant bias b.

The weights w are indexed by i, where the i-th weight belongs to the i-th input:

$$w_i$$

To show the time dependence, we write weight i at time t as:

$$w_i(t)$$

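Knowing the structure of the training data D, we can now assign the inputs x and the desired outputs d that represent the OR operator:

$$\mathbf{x}_1 = [0, 0] \text{ and } d_1 = 0$$

$$\mathbf{x}_2 = [0, 1] \text{ and } d_2 = 1$$

$$\mathbf{x}_3 = [1, 0] \text{ and } d_3 = 1$$

$$\mathbf{x}_4 = [1, 1] \text{ and } d_4 = 1$$

So our training data D is:

$$D = \{(\mathbf{x}_1, d_1), (\mathbf{x}_2, d_2), (\mathbf{x}_3, d_3), (\mathbf{x}_4, d_4)\}$$

and

$$s = 4$$

I will initialize the weights with the following values (they could also be initialized with random values):

$$\mathbf{w} = \left[ \begin{array}{r} 1 \\ -1 \\ -1 \end{array} \right]$$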
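To make the notation concrete, here is one possible way to lay out the training set and the weights in Python. This is only a sketch: the variable names (`or_samples`, `D`, `s`, `w`) and the choice of plain lists and tuples are assumptions, and the initial weights 1, -1, -1 are the ones used in the calculation later in the article.

```python
# OR truth table: two input features and the desired output d.
or_samples = [
    ([0, 0], 0),
    ([0, 1], 1),
    ([1, 0], 1),
    ([1, 1], 1),
]

# Prepend the constant input x0 = 1 to every input vector.
D = [([1] + x, d) for x, d in or_samples]
s = len(D)  # number of samples

# Initial weights [w0, w1, w2]; w0 plays the role of the bias.
w = [1, -1, -1]

# Value of feature i of training input vector j.
# The article counts samples from 1, Python lists from 0.
j, i = 2, 1
x_j, d_j = D[j - 1]
print(x_j[i], d_j)
```

With this layout, `D[j - 1][0][i]` corresponds to the i-th feature of the j-th training input vector, and `D[j - 1][0][0]` is always 1.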

Calculating the error

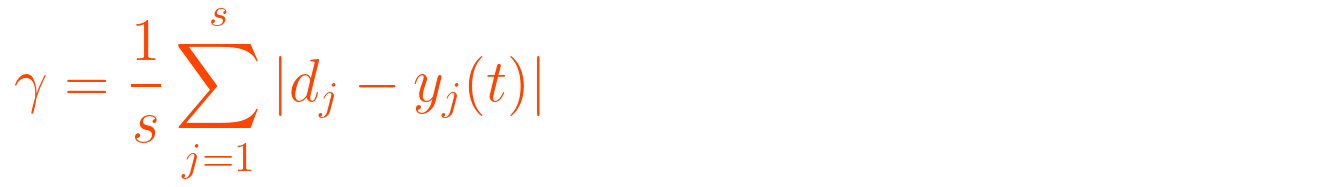

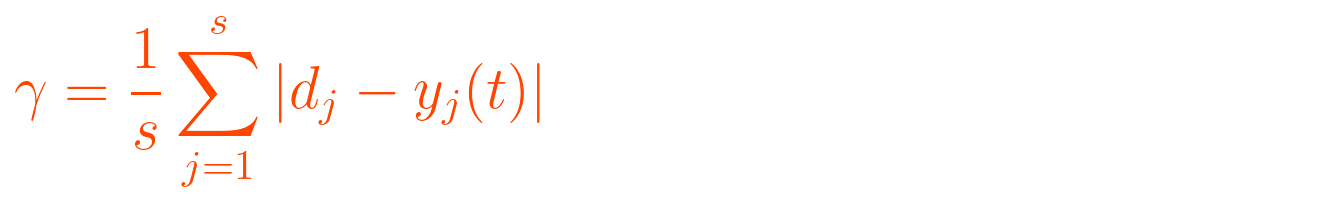

The learning algorithm has two steps that are repeated either for a predetermined number of iterations or until the error γ falls below a user-specified threshold. The error is calculated with this formula:

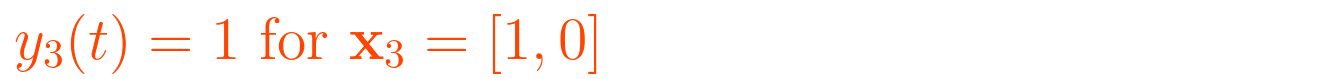

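Let’s say we have the following inputs and outputs, where y is the output of the perceptron at the given time t:

$$y_1(t) = 0 \text{ for } \mathbf{x}_1 = [0, 0]$$

$$y_2(t) = 1 \text{ for } \mathbf{x}_2 = [0, 1]$$

$$y_3(t) = 1 \text{ for } \mathbf{x}_3 = [1, 0]$$

$$y_4(t) = 0 \text{ for } \mathbf{x}_4 = [1, 1]$$

Obviously, the fourth output is wrong and the others are correct.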

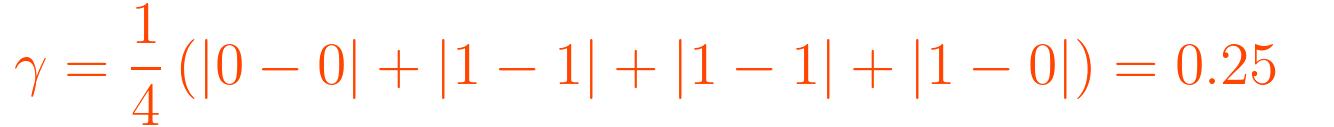

When we plug these values into the error function, we get the following value for γ:

$$\gamma = \frac{1}{4} \left( |0 - 0| + |1 - 1| + |1 - 1| + |1 - 0| \right) = \frac{1}{4} = 0.25$$

The error γ (gamma) can be read as a percentage.

In this case it is a quarter of the sum of the absolute differences between the desired outputs d and the predicted outputs y.

That means we have an error rate of 25%. In our case we want γ to be 0, so we need to repeat the learning algorithm until γ = 0.
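The same calculation can be sketched in a few lines of Python. The function name `iteration_error` is an assumption; the data are the desired and predicted outputs from the example above, with the fourth prediction being the wrong one.

```python
def iteration_error(desired, predicted):
    """Mean absolute difference between desired and predicted outputs."""
    return sum(abs(d - y) for d, y in zip(desired, predicted)) / len(desired)

d = [0, 1, 1, 1]  # desired outputs of the OR operator
y = [0, 1, 1, 0]  # perceptron outputs from the example; the fourth is wrong

gamma = iteration_error(d, y)
print(f"{gamma:.0%}")  # 25%
```

Since every output is either 0 or 1, each absolute difference is 0 for a correct sample and 1 for a wrong one, so γ is simply the share of misclassified samples.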

Steps of the learning algorithm

For each example in the training set D we need to perform the following two steps:

Calculate the output:

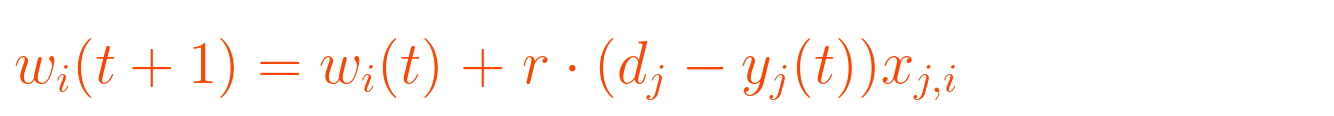

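Update the weights:

$$w_i(t+1) = w_i(t) + r \cdot (d_j - y_j(t)) \cdot x_{j,i}$$

for every weight w_i, where r is the learning rate.

Once both steps have been performed for every example, calculate the iteration error:

$$\gamma = \frac{1}{s} \sum_{j=1}^{s} |d_j - y_j(t)|$$

When the error γ > 0, go back to step 1.

Keep in mind that 0 is the threshold for our use case; a different error threshold can be specified by the user or by the requirements of the application.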
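The steps above can be sketched as a handful of small Python functions. This is a hedged illustration, not the author's implementation: all function names are assumptions, the step function is assumed to return 1 for an input of exactly 0, and the tiny data set at the end is made up purely to show the loop terminating.

```python
def heaviside(z):
    # Assumed convention: h(z) = 1 for z >= 0, otherwise 0.
    return 1 if z >= 0 else 0

def predict(w, x):
    """Step 1: output of the perceptron for one input vector."""
    return heaviside(sum(wi * xi for wi, xi in zip(w, x)))

def update(w, x, d, y, r):
    """Step 2: move every weight by r * (d - y) * x_i."""
    return [wi + r * (d - y) * xi for wi, xi in zip(w, x)]

def train_epoch(w, D, r):
    """Run both steps once for every sample in D and return the new weights."""
    for x, d in D:
        y = predict(w, x)
        w = update(w, x, d, y, r)
    return w

def iteration_error(w, D):
    """Share of samples that the current weights classify wrongly."""
    return sum(abs(d - predict(w, x)) for x, d in D) / len(D)

# Made-up data: one feature plus the constant input x0 = 1.
D = [([1, 0], 0), ([1, 1], 1)]
w = [0, 0]
r = 1
for epoch in range(10):          # cap on the number of iterations
    w = train_epoch(w, D, r)
    gamma = iteration_error(w, D)
    if gamma == 0:               # stop once every sample is classified correctly
        break
print(w, gamma)
```

Note that `update` leaves the weights untouched whenever the output equals the desired output, because the factor `d - y` is then 0.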

So, knowing all of this we can finally start to do the math for the first epoch.

The calculation

Let’s summarize what we have.

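These are the features and desired outputs of the OR operator:

$$\mathbf{x}_1 = [0, 0] \text{ and } d_1 = 0$$

$$\mathbf{x}_2 = [0, 1] \text{ and } d_2 = 1$$

$$\mathbf{x}_3 = [1, 0] \text{ and } d_3 = 1$$

$$\mathbf{x}_4 = [1, 1] \text{ and } d_4 = 1$$

We also know that the first feature of our training vectors is always 1:

$$x_{j,0} = 1$$

So this is our training set D:

$$D = \{([1, 0, 0], 0), ([1, 0, 1], 1), ([1, 1, 0], 1), ([1, 1, 1], 1)\}$$

Our weights w:

$$\mathbf{w} = [1, -1, -1]$$

The learning rate r, which I will set to 0.1:

$$r = 0.1$$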

And we start with time t = 0:

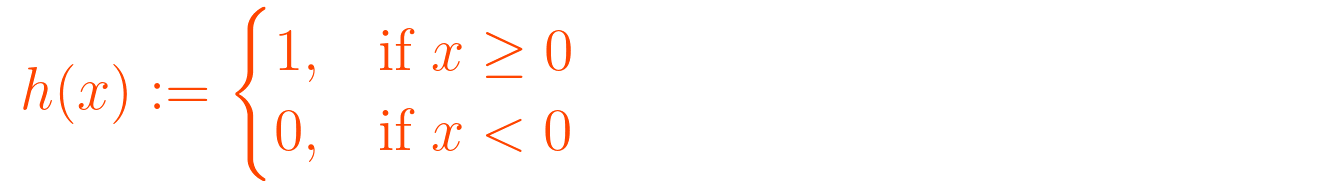

We will also use the Heaviside step function as the activation function:

$$h(z) = \begin{cases} 1 & \text{if } z \ge 0 \\ 0 & \text{if } z < 0 \end{cases}$$
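A minimal Python sketch of the step function follows. The value at exactly 0 is a convention, and the choice h(0) = 1 here is an assumption inferred from the calculation in this article, where a weighted sum of exactly 0 counts as output 1. The function name `heaviside` is likewise an assumption.

```python
def heaviside(z):
    """Heaviside step function with the convention h(0) = 1."""
    return 1 if z >= 0 else 0

print([heaviside(z) for z in (-0.5, 0, 0.5)])  # [0, 1, 1]
```

The convention matters for this walkthrough because one of the samples lands exactly on the boundary.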

Now we have gathered all the information we need, so we can start to calculate.

First sample:

Calculate output:

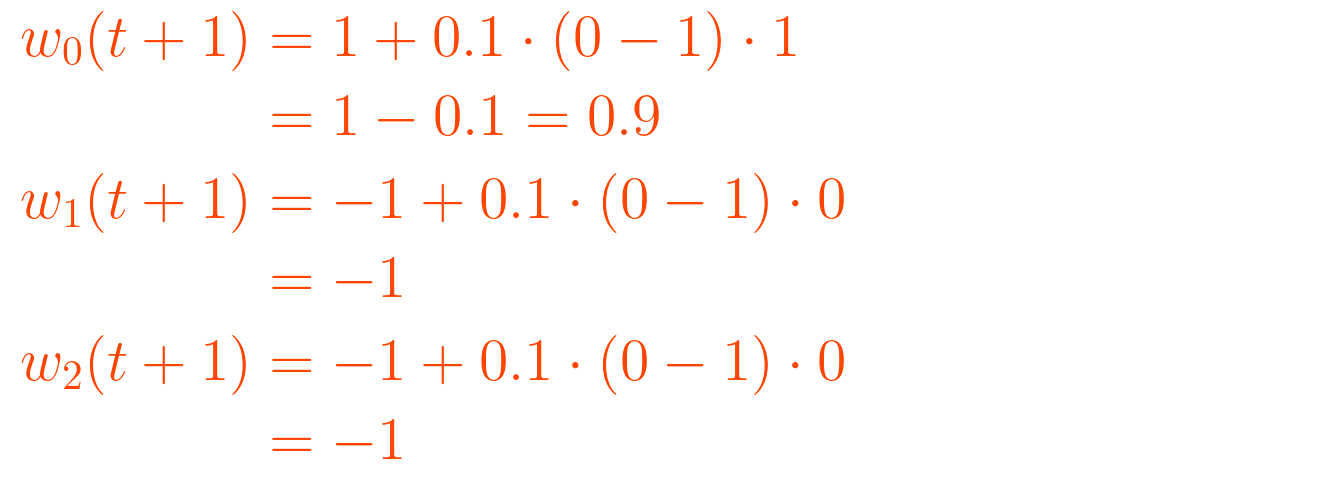

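Update the weights, since the output is not the desired output:

$$\mathbf{w}(t+1) = \mathbf{w}(t) + r \cdot (d_1 - y_1(t)) \cdot \mathbf{x}_1 = [1, -1, -1] + 0.1 \cdot (0 - 1) \cdot [1, 0, 0]$$

The updated weights are w = [0.9, -1, -1].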
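The first update can be reproduced with a short Python check. This is a sketch under stated assumptions: the step function returns 1 for a sum of exactly 0, and `fractions.Fraction` is used (my choice, not the article's) so that 0.1 is represented exactly and no rounding error creeps into the weights.

```python
from fractions import Fraction

r = Fraction(1, 10)                            # learning rate 0.1, kept exact
w = [Fraction(1), Fraction(-1), Fraction(-1)]  # initial weights
x1, d1 = [1, 0, 0], 0                          # first sample with the constant input x0 = 1

z = sum(wi * xi for wi, xi in zip(w, x1))      # weighted sum
y1 = 1 if z >= 0 else 0                        # Heaviside step, assuming h(0) = 1

# Update rule: w_i <- w_i + r * (d - y) * x_i
w = [wi + r * (d1 - y1) * xi for wi, xi in zip(w, x1)]
print(y1, [float(wi) for wi in w])             # 1 [0.9, -1.0, -1.0]
```

Only w0 changes here, because the other two inputs of the first sample are 0.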

Second sample:

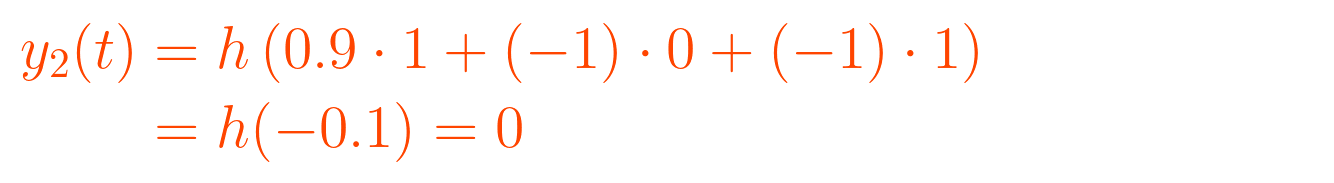

Calculate output:

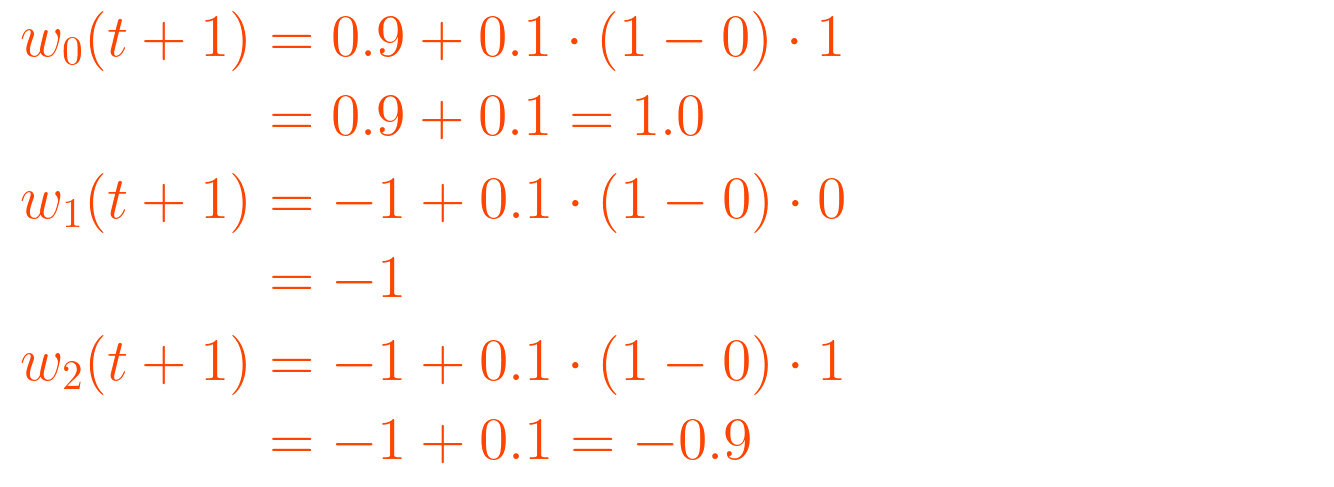

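Update the weights, since the output is not the desired output:

$$\mathbf{w}(t+1) = \mathbf{w}(t) + r \cdot (d_2 - y_2(t)) \cdot \mathbf{x}_2 = [0.9, -1, -1] + 0.1 \cdot (1 - 0) \cdot [1, 0, 1]$$

The updated weights are w = [1.0, -1, -0.9].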

Third sample:

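Calculate output:

$$y_3(t) = h[1.0 \cdot 1 + (-1) \cdot 1 + (-0.9) \cdot 0] = h[0] = 1$$

The output is the desired output, so nothing changes.

Therefore we don’t need to update the weights. Applying the update rule anyway would leave them unchanged, because the difference between the desired output and the actual output is 0.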

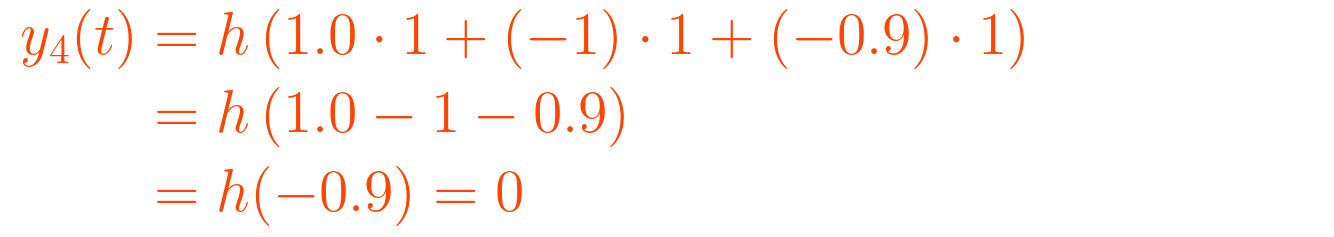

Fourth sample:

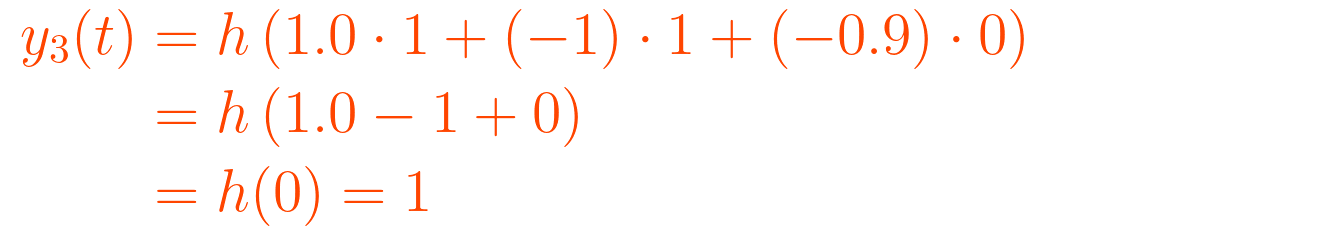

Calculate output:

$$y_4(t) = h[1.0 \cdot 1 + (-1) \cdot 1 + (-0.9) \cdot 1] = h[-0.9] = 0$$

Update the weights, since the output is not the desired output:

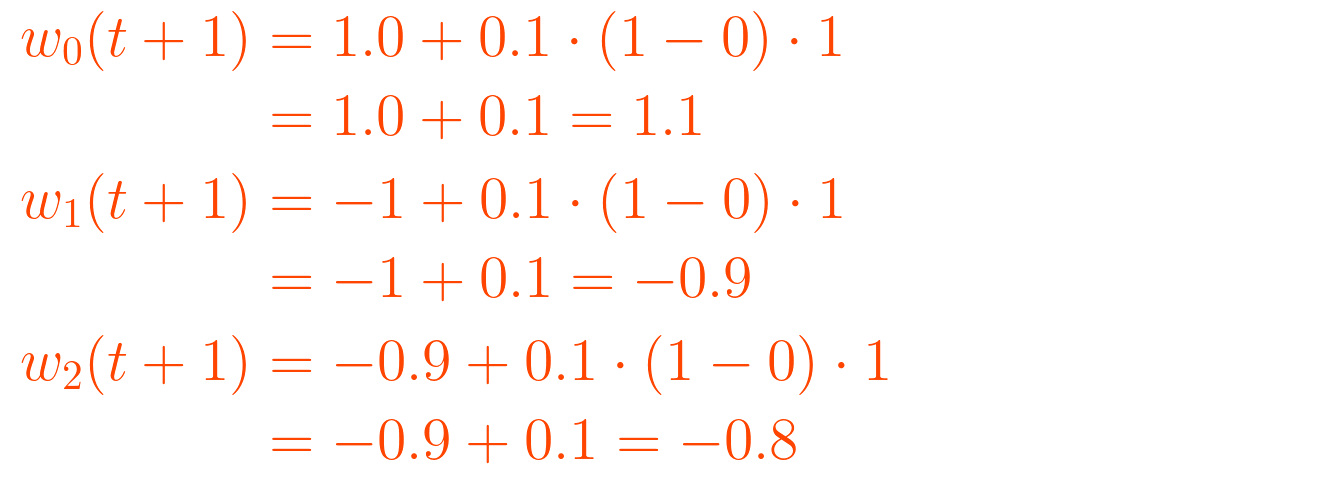

The updated weights are w = [1.1, -0.9, -0.8].

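Calculate the error. Evaluating all four samples with the updated weights gives the outputs 1, 1, 1 and 0:

$$\gamma = \frac{1}{4} \left( |0 - 1| + |1 - 1| + |1 - 1| + |1 - 0| \right) = \frac{2}{4} = 0.5$$

The error is 50%, and the first epoch is complete. These steps have to be repeated until the error is 0%.

Usually these steps are carried out by a program, and that is exactly what we will do for the next epochs.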

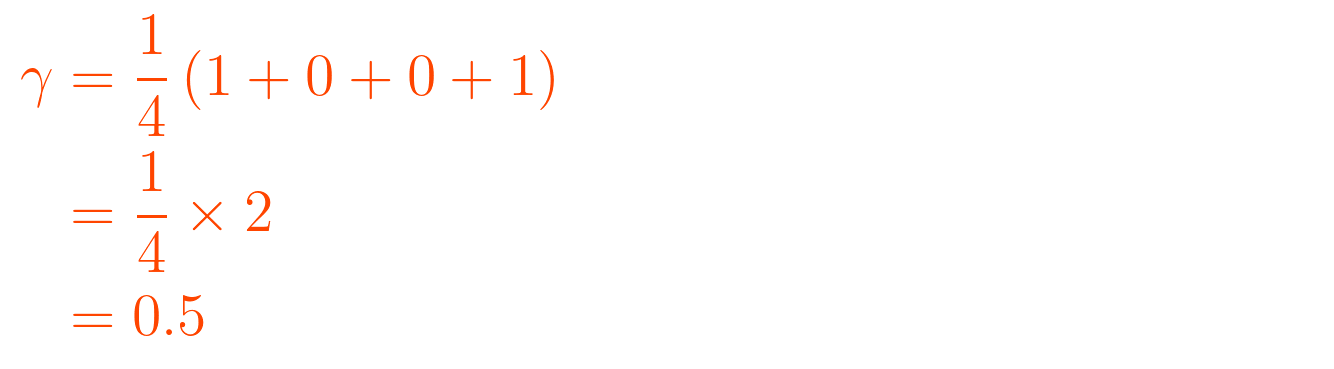

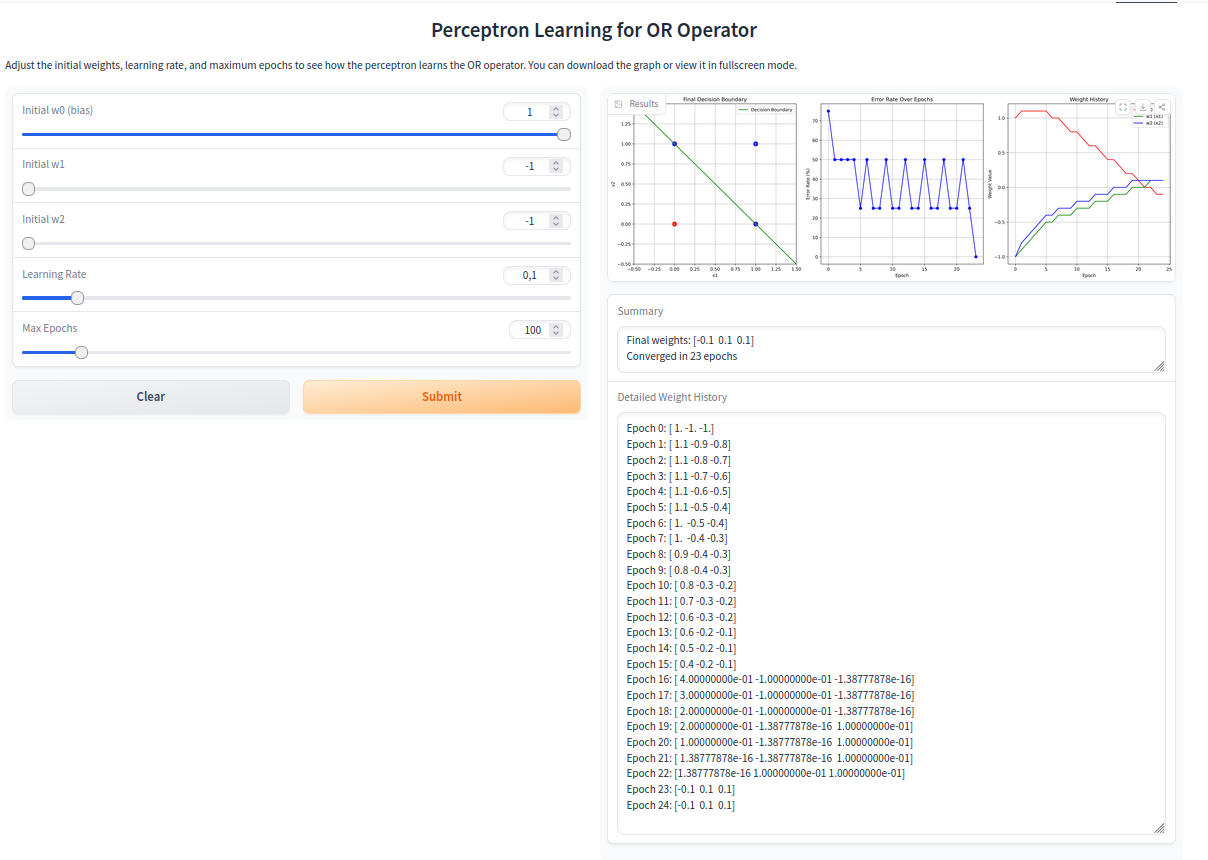

I built a Gradio application with Python that does the work and also visualizes some metrics for us.
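The source of that app is not reproduced here. As a rough idea of what such a program has to do, the following is a minimal sketch of the training loop, not the app's actual code. The function names, the `max_epochs` cap, and the `history` structure are assumptions, as is the convention h(0) = 1. `fractions.Fraction` is used so that weighted sums that land exactly on 0 are not disturbed by floating-point rounding.

```python
from fractions import Fraction

def heaviside(z):
    # Assumed convention: h(z) = 1 for z >= 0, otherwise 0.
    return 1 if z >= 0 else 0

def predict(w, x):
    return heaviside(sum(wi * xi for wi, xi in zip(w, x)))

def train(D, w, r, max_epochs=100):
    """Repeat the two steps per sample until the error is 0 or max_epochs is hit.

    Returns a list of (weights, error) tuples, one entry per epoch.
    """
    history = []
    for _ in range(max_epochs):
        for x, d in D:
            y = predict(w, x)                                      # step 1
            w = [wi + r * (d - y) * xi for wi, xi in zip(w, x)]    # step 2
        # Iteration error, evaluated with the weights at the end of the epoch.
        gamma = sum(abs(d - predict(w, x)) for x, d in D) / Fraction(len(D))
        history.append((w, gamma))
        if gamma == 0:
            break
    return history

# OR training set with the constant input x0 = 1 in front.
D = [([1, 0, 0], 0), ([1, 0, 1], 1), ([1, 1, 0], 1), ([1, 1, 1], 1)]
w_init = [Fraction(1), Fraction(-1), Fraction(-1)]
history = train(D, w_init, r=Fraction(1, 10))

first_w, first_gamma = history[0]
print([float(v) for v in first_w], float(first_gamma))  # [1.1, -0.9, -0.8] 0.5
final_w, final_gamma = history[-1]
print([float(v) for v in final_w], float(final_gamma))
```

The first entry of `history` reproduces the hand calculation for the first epoch: weights [1.1, -0.9, -0.8] and an error of 50%. The remaining entries are the kind of data a weight-history or error-rate plot would be drawn from.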

Visualization

Here is the visualization of the final decision boundary, the error rate over the epochs, and the weight history.

Try the Gradio App

Try the app here: Gradio App for the visualization of the learning algorithm of a single-layer perceptron

A screenshot of the app, showing the weight changes over the epochs, the error rate over the epochs, and the final decision boundary of the perceptron:

![\displaystyle \mathbf{x}_1 = [0, 0] \text{ and } d_1 = 0](https://substackcdn.com/image/fetch/$s_!TJdm!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fbf1346e2-2c88-4be2-8377-f935f3bde53e_1334x79.png)

![\displaystyle \mathbf{x}_2 = [0, 1] \text{ and } d_2 = 1](https://substackcdn.com/image/fetch/$s_!7SeI!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F9789daaa-f5aa-4aed-a482-77252435ad07_1334x79.png)

![\displaystyle \mathbf{x}_3 = [1, 0] \text{ and } d_3 = 1](https://substackcdn.com/image/fetch/$s_!rVAh!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F3eae0604-e4de-4414-a59a-9856cab64add_1334x79.png)

![\displaystyle \mathbf{x}_4 = [1, 1] \text{ and } d_4 = 1](https://substackcdn.com/image/fetch/$s_!lc91!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F553f8e2f-e12b-4a6d-8a7e-32a03e7b1d24_1334x79.png)

![\mathbf{w} = \left[ \begin{array}{r} 1 \\ -1 \\ -1 \end{array} \right]](https://substackcdn.com/image/fetch/$s_!WHqs!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fadc5b482-b424-47ef-a9f4-c64bf5749800_1334x265.png)

![\displaystyle y_1(t) = 0 \text{ for } \mathbf{x}_1 = [0, 0]](https://substackcdn.com/image/fetch/$s_!M07k!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F835f194e-72b7-4c20-9183-e0e61d50326d_1334x79.png)

![\displaystyle y_2(t) = 1 \text{ for } \mathbf{x}_2 = [0, 1]](https://substackcdn.com/image/fetch/$s_!PW9G!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb9a7cd10-3153-46b5-9325-e612d7061b87_1334x79.png)

![\displaystyle y_4(t) = 0 \text{ for } \mathbf{x}_4 = [1, 1]](https://substackcdn.com/image/fetch/$s_!CvpM!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fa234f1ea-9b44-4658-b2ca-fbc7bf9a60f5_1334x79.png)

![{\displaystyle {\begin{aligned}y_{j}(t)&=h[\mathbf {w} (t)\cdot \mathbf {x} _{j}]\\&=h[w_{0}(t)x_{j,0}+w_{1}(t)x_{j,1}+w_{2}(t)x_{j,2}+\dotsb +w_{n}(t)x_{j,n}]\end{aligned}}}](https://substackcdn.com/image/fetch/$s_!7167!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb9661c4e-edb7-46c4-9754-21f08a09d964_1334x189.png)

![{\displaystyle {\begin{aligned} y_{1}(t) &= h\left[1 \cdot 1 + (-1) \cdot 0 + (-1) \cdot 0\right] \\ &= h[1] = 1 \end{aligned}}}](https://substackcdn.com/image/fetch/$s_!5D5I!,w_1456,c_limit,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fba98737d-f737-4e89-bb8f-9bed96d2597d_1334x183.png)